Graylog with openSUSE

Going through logs is a necessary evil in today's ever-evolving threat landscape. Intrusion attempts, phishing, malware and other threats happen every day and put everyone at greater risk.

Even for home users, being able to collect and read logs is becoming an important factor in my eyes as more technology enters our homes.

We are filling our homes with IoT devices such as fridges, washers and other automated hardware without knowing where they connect or what they send, and many manufacturers simply do not care about the security of home users.

Letting all of these IoT devices roam free and talk to everything is a big mistake.

It is important to get an overview of what every IoT device and home server is doing in the network and what it wants to access on the internet. One key component is a central logging tool that collects logs from different sources in one searchable GUI. Another is a well-configured firewall and network that send their logs to the same central place.

This is a more technical guide aimed at advanced home users and professionals, but then again there is nothing wrong with learning how to collect logs and learning log management.

Requirements

A physical or virtual machine whose CPU supports AVX (MongoDB needs it, see the MongoDB section below) and a lot of space for log data. The amount of space depends on how many logs you are collecting; I have 100GB+.

An already installed openSUSE Leap with at least 4GB of memory, a static IP, internet access and SSH access (installing it is not covered in this how-to).

At the time of writing this how-to I used openSUSE Leap 15.5, OpenSearch 2.11.x and Graylog Open 5.2.x.

IPv6

If you are not running in an IPv6 environment I recommend that you disable IPv6 in openSUSE. When it is active, some services may listen only on IPv6 and not on IPv4.

To disable IPv6 on openSUSE do the following, then restart the server before continuing.

Edit /etc/sysctl.conf

Add the following lines at the end of the file and save.

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1

Reboot.

Now you can continue...

Installation

Graylog uses MongoDB to store its configuration and metadata and OpenSearch to store and search the log data, so we will start by setting these up first.

MongoDB

As mentioned in the requirements, newer versions of MongoDB (5.0 and later) require a CPU feature called AVX by default. It is possible to compile MongoDB without it, but that is not covered in this how-to; here we will use the default AVX-enabled package.

To see if you have AVX support, check the CPU flags that Linux reports in /proc/cpuinfo. If the check returns nothing, either your CPU does not support AVX or your virtual machine is configured with the wrong CPU option. Check how to set up your virtualization software so that it uses the host CPU directly instead of emulating a different CPU.

You should see either avx or avx2 among the long line of flags in the output.
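One way to run this check is sketched below. It assumes a Linux system that lists its CPU flags in /proc/cpuinfo; the function name has_avx is only an illustration and not part of any package.

```shell
#!/bin/sh
# Return success when the given cpuinfo file (default: /proc/cpuinfo)
# lists avx or avx2 as a whole word among the CPU flags.
has_avx() {
    grep -q -w -E 'avx2?' "${1:-/proc/cpuinfo}"
}

if has_avx; then
    # Show which of the two flags were found, one per line.
    grep -o -w -E 'avx2?' /proc/cpuinfo | sort -u
else
    echo "No AVX support found, check the CPU type of your virtual machine"
fi
```

The -w option matters here: without it, flags such as avx512f would also count as a match for avx.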

Install

Import MongoDB GPG key.

Add repository.

sudo zypper addrepo --gpgcheck "https://repo.mongodb.org/zypper/suse/15/mongodb-org/6.0/x86_64/" mongodb

Update repositories.

Install MongoDB.

Enable and start MongoDB.

Configure

Connect to MongoDB to add the admin DB and a user.

Add admin DB.

Substitute YourPassword with your own password, then paste the following into the mongo shell and press Enter to run it.

You should get an ok back like this if it succeeded.

Exit mongodb shell.

Time to add the graylog DB; this is done as the root user we just created.

Add graylog DB.

Substitute YourGraylogUser and YourPassword with your own user and password, then paste the following into the mongo shell and press Enter to run it. Save the user and password for later too; they will be added to the graylog configuration.

And you should get an ok back like this if it succeeded.

Exit mongodb shell.

Now that we have secured MongoDB and added the graylog DB, it is time to move on.

OpenSearch

Graylog can use both Elasticsearch and OpenSearch but it seems they are moving away from Elasticsearch due to some licensing changes, so we will use OpenSearch for this installation.

Install

Import GPG key.

Add repository configuration.

sudo curl -SL https://artifacts.opensearch.org/releases/bundle/opensearch/2.x/opensearch-2.x.repo -o /etc/zypp/repos.d/opensearch-2.x.repo

Update repositories.

Install OpenSearch.

Configure

Edit opensearch.yml.

Change the following lines and remove the # if they are commented out: name your cluster, set the listening IP to 127.0.0.1 and the port to 9200.
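As a rough sketch, the three lines could end up looking like this in opensearch.yml. The cluster name graylog is only an assumed example, so pick your own; cluster.name, network.host and http.port are the standard OpenSearch setting names.

```yaml
# Assumed example values; only the cluster name is free to choose.
cluster.name: graylog
network.host: 127.0.0.1
http.port: 9200
```

Binding to 127.0.0.1 keeps OpenSearch reachable only from the server itself, which is what we want since the security plugin gets disabled further down.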

To remove the demo settings, delete the two marker lines below and everything between them.

######## Start OpenSearch Security Demo Configuration ########

...

######## End OpenSearch Security Demo Configuration ########

Add the following lines at the end of the file. They turn off automatic index creation and the security plugin, as Graylog requires, and since we are running on a single machine we also set the discovery type to single-node. Then save the file.

# Graylog Settings

action.auto_create_index: false

plugins.security.disabled: true

discovery.type: single-node

There is an issue with a service file created under /etc/init.d/ that interferes with enabling the service. Rename it as follows and you should not have any issues enabling the service.

Enable OpenSearch service.

Reload service daemon.

Start OpenSearch service.

Graylog

Time to install graylog and configure it.

Install

Import GPG key, this can take a couple of minutes, just wait it out.

Add the repository configuration.

sudo zypper addrepo --gpgcheck --refresh 'https://packages.graylog2.org/repo/el/stable/5.0/$basearch' 'graylog'

At the time of writing there is an issue with the repomd.xml file: it is unsigned. Hopefully they will resolve this in the near future so that all files are signed and we do not get this error.

This is the message you will get; to be able to install graylog you need to type yes to continue.

Warning: File 'repomd.xml' from repository 'graylog' is unsigned.

Note: Signing data enables the recipient to verify that no modifications

occurred after the data were signed. Accepting data with no, wrong or unknown

signature can lead to a corrupted system and in

extreme cases even to a system compromise.

Note: File 'repomd.xml' is the repositories master index file.

It ensures the integrity of the whole repo.

Warning: We can't verify that no one meddled with this file, so it might

not be trustworthy anymore! You should not continue unless you know it's safe.

File 'repomd.xml' from repository 'graylog' is unsigned, continue? [yes/no] (no):

Install graylog.

Configure

Create a unique key for your install; this one goes into password_secret. Copy the output to a text document.

Create a unique password for your admin user; its SHA-256 hash goes into root_password_sha2. Copy the output of this to a text document too.
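A minimal sketch of both steps, assuming a system with /dev/urandom and the coreutils tools od and sha256sum. The function names generate_secret and hash_password are made up for this example, and YourPassword is a placeholder.

```shell
#!/bin/sh
# Print a random 64-character hex string for password_secret
# (32 random bytes, each written as two hex digits).
generate_secret() {
    head -c 32 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Print the SHA-256 hash of the password given as the first argument,
# in the form expected by root_password_sha2.
hash_password() {
    printf '%s' "$1" | sha256sum | cut -d ' ' -f 1
}

echo "password_secret = $(generate_secret)"
echo "root_password_sha2 = $(hash_password 'YourPassword')"
```

Note that a password typed directly on the command line may end up in your shell history, so you might prefer to read it from a prompt instead.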

Edit Graylog configuration and add the key and password to the file.

Add the 64-character key we created earlier, and make sure it is on one line.

# Changing this value after installation will render all user sessions and encrypted values in the database invalid. (e.g. encrypted access tokens)

password_secret = your64characterkeyhere

Set the default root username; it is recommended not to use the default.

Add the SHA-256 password hash we generated earlier.

# Create one by using for example: echo -n yourpassword | shasum -a 256

# and put the resulting hash value into the following line

root_password_sha2 = yourshapasswordkey

Add the elasticsearch_hosts address to make graylog look for OpenSearch on a specific address and port, in this case the localhost address and port 9200. And yes, it is called elasticsearch_hosts even though we are running OpenSearch, but that will most likely change in the near future.

# List of Elasticsearch hosts Graylog should connect to.

...

#elasticsearch_hosts = http://node1:9200,http://user:password@node2:19200

elasticsearch_hosts = http://127.0.0.1:9200

Edit the following line and add the DB user and password that we set up earlier when installing MongoDB.

# Authenticate against the MongoDB server

# '+'-signs in the username or password need to be replaced by '%2B'

mongodb_uri = mongodb://YourGraylogUser:YourPassword@localhost:27017/graylog

Save the configuration file.
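The comment in the configuration file says that '+' signs in the username or password must be written as '%2B'. A small sketch of how the mongodb_uri line could be built with that replacement follows; the helper name encode_plus and the example password are hypothetical.

```shell
#!/bin/sh
# Replace every '+' with '%2B', as the comment in the configuration file asks for.
encode_plus() {
    printf '%s' "$1" | sed 's/+/%2B/g'
}

# Hypothetical example credentials; use the ones created in the MongoDB section.
user='YourGraylogUser'
password='Your+Pass+word'

echo "mongodb_uri = mongodb://$(encode_plus "$user"):$(encode_plus "$password")@localhost:27017/graylog"
```

This sketch only handles the '+' case named in the configuration file; other special characters in a password may need percent-encoding as well.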

Time to enable the service. Here you have the same issue as with OpenSearch: a service file under /etc/init.d/ gets in the way. Rename it as follows and you should not have any issues enabling the service.

Enable graylog service.

Reload service daemon.

Start graylog service.

nginx

To expose the graylog GUI we will use nginx, since it is easier to configure SSL support with nginx than it is with graylog.

Install

Install nginx

Configure

Make a cert directory under /etc/nginx.

In this guide we will create a self-signed certificate, but if you have the option, a certificate from a trusted certificate authority is the way to go.

Create the self-signed certificate and answer the questions with your information.

sudo openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout /etc/nginx/cert/private.key -out /etc/nginx/cert/certificate.crt

These are the questions you get. Since it is a self-signed certificate that only exists on this machine, the one important question to answer is Common Name. If you are using your own certificate authority or a real certificate, most of the questions need answers. Common Name must be a name, not an IP, even if you end up accessing the server via IP. Values within [] are defaults, so fill in those lines too if you do not want the default values in your certificate.

Country Name (2 letter code) [AU]:

State or Province Name (full name) [Some-State]:

Locality Name (eg, city) []:

Organization Name (eg, company) [Internet Widgits Pty Ltd]:

Organizational Unit Name (eg, section) []:

Common Name (e.g. server FQDN or YOUR name) []:

Email Address []:

Create and edit a file called proxy.conf for nginx. This file tells nginx where to fetch the graylog GUI from internally on the server so it can present it to you.

Add the following information to the file and save it. Replace www.example.org with the server name your certificate is issued for, that is, the Common Name we entered when creating the self-signed certificate.

server

{

listen 443 ssl http2;

server_name www.example.org;

ssl_certificate /etc/nginx/cert/certificate.crt;

ssl_certificate_key /etc/nginx/cert/private.key;

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers HIGH:!aNULL:!MD5;

location /

{

proxy_set_header Host $http_host;

proxy_set_header X-Forwarded-Host $host;

proxy_set_header X-Forwarded-Server $host;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Graylog-Server-URL https://$server_name/;

proxy_pass http://127.0.0.1:9000;

}

}

Enable nginx service.

Reload service daemon.

Start nginx service.

Firewall

Now we are almost done with the server installation. Since openSUSE has an active firewall by default, we must open port 443 for incoming requests.

To see which zone is configured as the default for filtering incoming requests, run the following command.

Result:

As you can see I have public as default, but I would rather have dmz since I like it locked down even more. If public is fine for you, just use that zone name when adding your rules.

So to change that run the following command.

To see which zone is active on your network card, run the following.

It should list your network card under the public zone. To change the zone for your network card, run the following command, substituting eth0 with your network card name, and ignore the Docker part.

The dmz zone already has an allow rule for SSH, so you should not notice the change from your side when changing the default zone in this case.

To open up for incoming requests we will create a new rule file for nginx.

Add the following information and save.

<?xml version="1.0" encoding="utf-8"?>

<service>

<short>nginx Server</short>

<description>nginx Server ports</description>

<port port="443" protocol="tcp"/>

</service>

Reload firewall

Allow nginx through the firewall.

And reload the firewall again.

Now you should be able to access the GUI in a browser, either via the name you gave the self-signed certificate (if you have some local name lookup) or via IP.

So if your server is named logserver and has the IP 10.10.10.10, you would connect to https://logserver or https://10.10.10.10.

If you cannot reach the server after about 10 minutes, reboot it. I have seen the firewall not letting the traffic through until after a complete restart of the server.

You will get a warning about an invalid certificate since it is self-signed.

Logs

To finalize the basics of log gathering we need to set up so-called inputs in graylog and configure clients to send data.

Graylog

When you log in to graylog for the first time it can be a bit confusing where to start. We will start by setting up two listeners for log gathering to see how that is done.

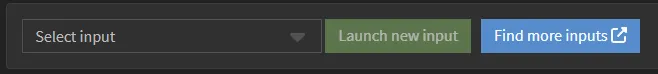

Go to System / Inputs. Here you are presented with options to configure various inputs; we will add two in syslog format as a start.

Start by choosing Syslog TCP in the list, then click Launch new input to start configuring it.

Set at least the following parameters and then click Launch input.

- Title (Ex. SysLogTCP)

- Bind address (Your server IP, not 127.0.0.1)

- Port (set to 5140, 514 is already occupied)

- Store full message

The option Store full message is good to have. Graylog tries to split each incoming log message into separate fields, and if it does not get that right it helps to see the full message to determine what was missed. Note that this increases the amount of data stored.

Now add a new input, but this time choose Syslog UDP and fill in the same values as above except for the title. Here you can use SysLogUDP unless you want something different from my examples; just keep the titles unique.

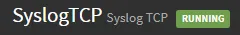

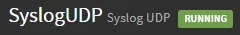

Now you should have two log listeners running.

Now to finalize the log gathering on the server side we must open the firewall for incoming logs.

Start by creating a firewall service file.

Add the following information and save, this will open ports for the log listeners we just created above.

<?xml version="1.0" encoding="utf-8"?>

<service>

<short>Graylog Log Input</short>

<description>Ports for incoming logs</description>

<port port="5140" protocol="tcp"/>

<port port="5140" protocol="udp"/>

</service>

Reload firewall

Allow the Graylog log inputs through the firewall.

And reload the firewall again.

When you later add new log inputs on new ports, edit the file above, add a new line with the port and protocol, and reload the firewall to activate the changes.
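As an illustration, this is how the service file could look after adding one more input. The extra port 5044/tcp is purely a hypothetical example; use whatever port and protocol your new input listens on.

```xml
<?xml version="1.0" encoding="utf-8"?>
<service>
<short>Graylog Log Input</short>
<description>Ports for incoming logs</description>
<port port="5140" protocol="tcp"/>
<port port="5140" protocol="udp"/>
<!-- Hypothetical additional input, added for this example only -->
<port port="5044" protocol="tcp"/>
</service>
```

Since the service is already allowed in the zone, a firewall reload is enough for the new port to be opened.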

Clients

Depending on the client there are several ways of configuring log sending. I will show some examples to get you started.

I recommend that you read up on how each device you want to collect logs from works and which format it sends logs in. Graylog can receive logs in many formats; just take a look at the list where we configured our syslog inputs earlier.

I will be using the IP 10.10.10.10 in my examples, which you should substitute with your log server IP. I will use Ubuntu and openSUSE in these examples, but any Linux distribution using rsyslog should be similar in configuration.

Ubuntu

Ubuntu uses syslog format via rsyslog in combination with journald and this is how to configure it.

Edit rsyslog.

Add this after the first set of comments.

*.* action(type="omfwd" target="10.10.10.10" port="5140" protocol="tcp"

action.resumeRetryCount="100"

queue.type="linkedList" queue.size="10000")

Restart rsyslog.

Edit the journald configuration so that it forwards to rsyslog.

Uncomment the following line, change its value to yes and then save.
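For reference, a sketch of the resulting setting, assuming the standard configuration file /etc/systemd/journald.conf where the option lives in the [Journal] section:

```ini
[Journal]
# Forward journal messages to the local syslog daemon (rsyslog).
ForwardToSyslog=yes
```

The rest of the file can stay as it is; the commented-out lines only show the built-in defaults.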

Restart Journald

openSUSE

openSUSE uses rsyslog by default on Leap, and I think Tumbleweed uses journald at the time of writing. Here we configure it on Leap.

Edit remote.conf

Here there are more options to configure, since we need to tell it to keep the logs locally when the log server is temporarily unreachable.

# ######### Enable On-Disk queues for remote logging ##########

#

# An on-disk queue is created for this action. If the remote host is

# down, messages are spooled to disk and sent when it is up again.

#

$WorkDirectory /var/spool/rsyslog # where to place spool files

$ActionQueueFileName tmplog_ # unique name prefix for spool files

$ActionQueueMaxDiskSpace 1g # 1gb space limit (use as much as possible)

$ActionQueueSaveOnShutdown on # save messages to disk on shutdown

$ActionQueueType LinkedList # run asynchronously

$ActionResumeRetryCount -1 # infinite retries if host is down

*.* action(type="omfwd" target="10.10.10.10" port="5140" protocol="tcp"

action.resumeRetryCount="100"

queue.type="linkedList" queue.size="10000")

Restart rsyslog

Now you have seen some samples of how to configure a few kinds of clients. Hardware appliances such as firewalls and switches often have limited configuration, where you can only tell them where to send the logs and not in what format, but many of them use the syslog format and should be easy to configure and connect.

Memory tweaking

When you have more than 4GB of system memory you need to tweak how much memory OpenSearch and Graylog use. They have a low static setting and do not look at what is available. It is important to leave some memory for the OS and MongoDB; I would say use at most 60-70% for OpenSearch and Graylog together and leave the rest for the OS and MongoDB.

Start by doing the math. If we have 8GB in our server, 70% is 5.6GB, which I will round up to 6GB. I will use a 50/50 approach and give each service 3GB instead of the 1GB that is standard for each.
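The same calculation as a small shell sketch, in case you want to redo it for a different amount of memory. The function name heap_per_service is made up for this example, and the 70% share and 50/50 split are the rule of thumb from above, not fixed requirements.

```shell
#!/bin/sh
# Heap size in whole GB for each of OpenSearch and Graylog, given the
# total system memory in GB: take 70%, round up to a whole GB, split 50/50.
heap_per_service() {
    total_gb="$1"
    budget_gb=$(( (total_gb * 70 + 99) / 100 ))   # 70% of total, rounded up
    echo $(( budget_gb / 2 ))                     # half for each service
}

heap=$(heap_per_service 8)
echo "-Xms${heap}g"
echo "-Xmx${heap}g"
```

For 8GB this prints -Xms3g and -Xmx3g. If the rounded budget is an odd number, the split rounds down, which errs on the side of leaving more memory for the OS and MongoDB.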

Start with OpenSearch: change both -Xms1g and -Xmx1g from 1 to 3 and save.

New Values.

# Xms represents the initial size of total heap space

# Xmx represents the maximum size of total heap space

-Xms3g

-Xmx3g

Now edit the graylog settings, change the same two values in the following line and save.

# Default Java options for heap and garbage collection.

GRAYLOG_SERVER_JAVA_OPTS="-Xms3g -Xmx3g -server -XX:+UseG1GC -XX:-OmitStackTraceInFastThrow"

When that is done restart each service.

OpenSearch

Graylog

Now you are done and have increased the memory for OpenSearch and Graylog.

Conclusion

There are more options to configure in graylog, but the aim of this how-to was to get you started on log gathering and take you a step closer to seeing the value of having all logs in the same place.

In graylog you can create queries that combine log data from different sources to get a broader view of what is happening.

I use graylog daily since I have chosen to build a network that is secure by default: my different server networks are closed by default and I only open what is really needed, so no IoT device or server is allowed to speak freely to the internet. Many IoT manufacturers use services like Amazon AWS, Fastly, Akamai and so on for their servers, and not knowing where my data is going is not an option for me.

I hope that you had some use of this how-to and that it got you started in getting control of your logs.